Huggingface takes the 2nd approach, as in Fine-tuning with native PyTorch/TensorFlow, where TFDistilBertForSequenceClassification adds the custom classification layer classifier on top of the base distilbert model, which remains trainable. The small learning rate requirement applies here as well, to avoid catastrophic forgetting.

from transformers import TFDistilBertForSequenceClassification
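The 2nd and 3rd approaches can be sketched roughly as follows. This is a minimal sketch, not the method of the original post: it assumes TensorFlow and a transformers release that still ships the TensorFlow model classes. The function name `build_classifier`, the checkpoint `distilbert-base-uncased`, and `num_labels=2` are illustrative assumptions.

```python
def build_classifier(learning_rate=2e-5, train_base=True, num_labels=2):
    """Return a compiled DistilBERT classifier (hypothetical helper).

    train_base=True  -> 2nd approach: base model and classifier both train.
    train_base=False -> 3rd approach: base model frozen, only the head trains.
    """
    # Imported lazily so that defining this function does not require the
    # heavy dependencies to be installed.
    import tensorflow as tf
    from transformers import TFDistilBertForSequenceClassification

    # Checkpoint name is an assumption; any DistilBERT checkpoint should work.
    model = TFDistilBertForSequenceClassification.from_pretrained(
        "distilbert-base-uncased", num_labels=num_labels
    )

    # The base model is exposed as the layer named "distilbert".
    model.distilbert.trainable = train_base

    # A small learning rate, to limit catastrophic forgetting.
    model.compile(
        optimizer=tf.keras.optimizers.Adam(learning_rate=learning_rate),
        loss=tf.keras.losses.SparseCategoricalCrossentropy(from_logits=True),
        metrics=["accuracy"],
    )
    return model
```

Calling `build_classifier(train_base=False)` would freeze the base, so a larger learning rate is less risky there, since the pre-trained weights are not updated.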

TFDistilBertModel is the bare base model with the name distilbert, as its summary shows:

Model: "tf_distil_bert_model_1"
distilbert (TFDistilBertMain multiple 66362880

BERT is pre-trained on unsupervised tasks, one of which is Task #2: Next Sentence Prediction (NSP). Hence, the base BERT model is like a half-baked model that can be fully baked for the target domain (1st way). We can also use it as part of our custom model training, with the base trainable (2nd) or not trainable (3rd). I will describe the 1st way as part of the 3rd approach below.

How to Fine-Tune BERT for Text Classification? demonstrated the 1st approach of Further Pre-training, and pointed out that the learning rate is the key to avoiding Catastrophic Forgetting, where the pre-trained knowledge is erased while learning new knowledge:

"We find that a lower learning rate, such as 2e-5, is necessary to make BERT overcome the catastrophic forgetting problem. With an aggressive learn rate of 4e-4, the training set fails to converge."

This is probably the reason why the BERT paper used 5e-5, 4e-5, 3e-5, and 2e-5 for fine-tuning:

"For each task, we selected the best fine-tuning learning rate (among 5e-5, 4e-5, 3e-5, and 2e-5) on the Dev set." "We use a batch size of 32 and fine-tune for 3 epochs over the data for all GLUE tasks."

Note that the base model pre-training itself used a higher learning rate:

"The model was trained on 4 cloud TPUs in Pod configuration (16 TPU chips total) for one million steps with a batch size of 256. The sequence length was limited to 128 tokens for 90% of the steps and 512 for the remaining 10%. The optimizer used is Adam with a learning rate of 1e-4, β1 = 0.9 and β2 = 0.999, a weight decay of 0.01, learning rate warmup for 10,000 steps and linear decay of the learning rate after."
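To make the pre-training schedule quoted above concrete, here is a minimal sketch of a warmup-then-linear-decay learning rate using the quoted numbers (peak 1e-4, 10,000 warmup steps, one million steps in total). The source does not give the exact shape, so the linear warmup from zero and the decay to zero at the final step are assumptions, and the function name `pretraining_lr` is hypothetical.

```python
def pretraining_lr(step, peak_lr=1e-4, warmup_steps=10_000, total_steps=1_000_000):
    """Learning rate at a given step: linear warmup, then linear decay.

    Assumptions (not stated in the source): warmup starts at 0 and the
    decay reaches 0 exactly at total_steps.
    """
    if step < warmup_steps:
        # Warmup phase: ramp linearly from 0 up to peak_lr.
        return peak_lr * step / warmup_steps
    # Decay phase: fall linearly from peak_lr down to 0 at total_steps.
    remaining = max(total_steps - step, 0)
    return peak_lr * remaining / (total_steps - warmup_steps)


if __name__ == "__main__":
    for step in (0, 5_000, 10_000, 505_000, 1_000_000):
        print(step, pretraining_lr(step))
```

Under these assumptions the pre-training peak of 1e-4 is five times the 2e-5 that the fine-tuning quotes recommend, which is the gap the text points to.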

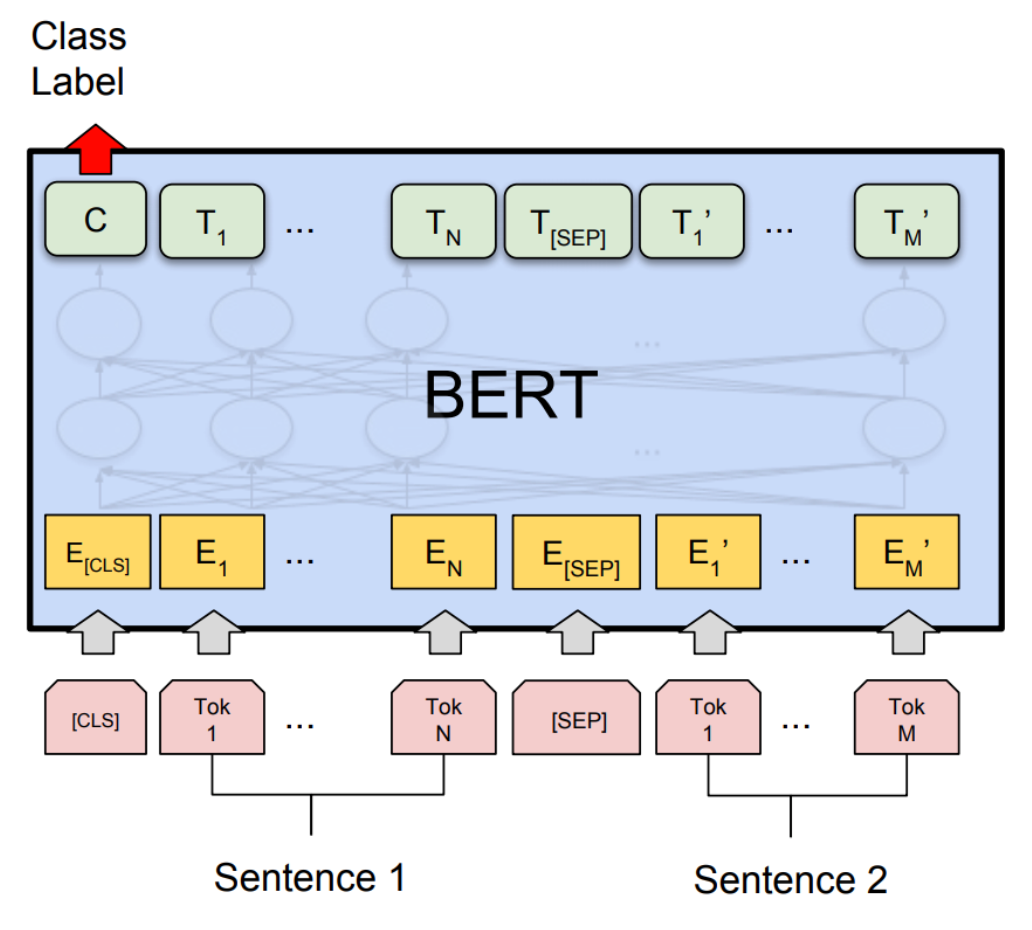

From the BERT paper: "we pre-train BERT using two unsupervised tasks".
